Research improves robot/human relations using augmented reality

Key question: What's the most important info for humans to have?

Robots and humans are increasingly sharing the same spaces — in warehouses, factories, hospitals and other settings.

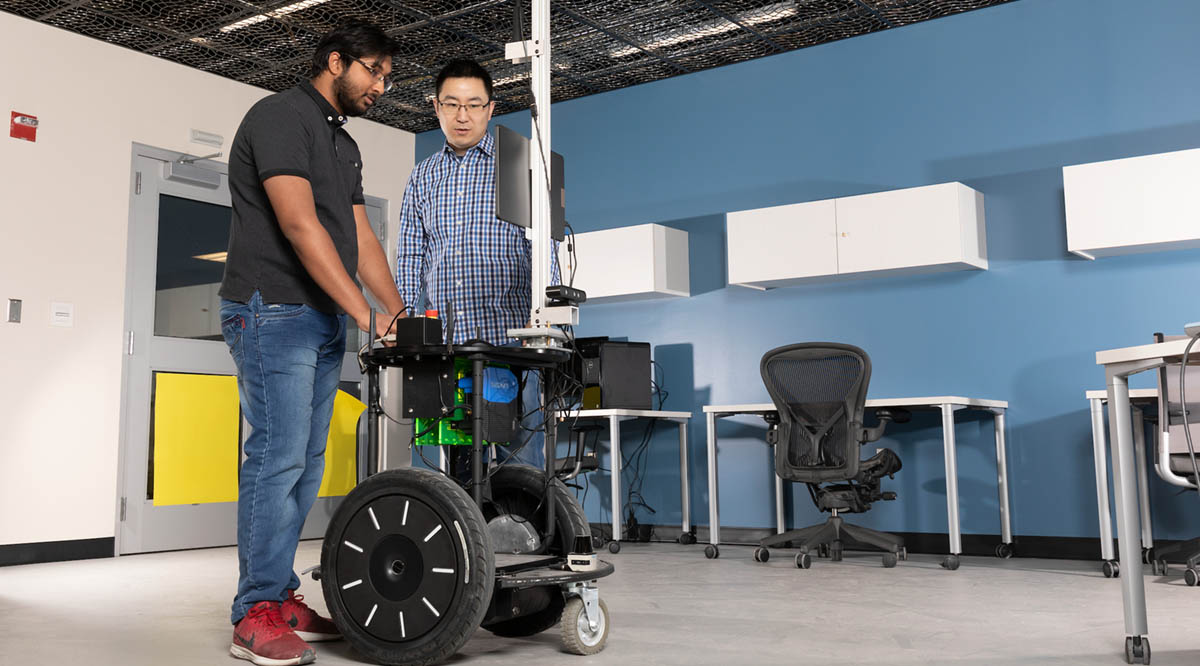

Managing those interactions safely has driven research by Assistant Professor Shiqi Zhang, a computer science faculty member at Binghamton University’s Thomas J. Watson College of Engineering and Applied Science.

In a new paper presented in December at the Conference on Robot Learning in Auckland, New Zealand, Zhang and co-authors Jack Albertson ’22 and PhD student Kishan Chandan address a key issue when humans use augmented reality to track robots and their tasks.

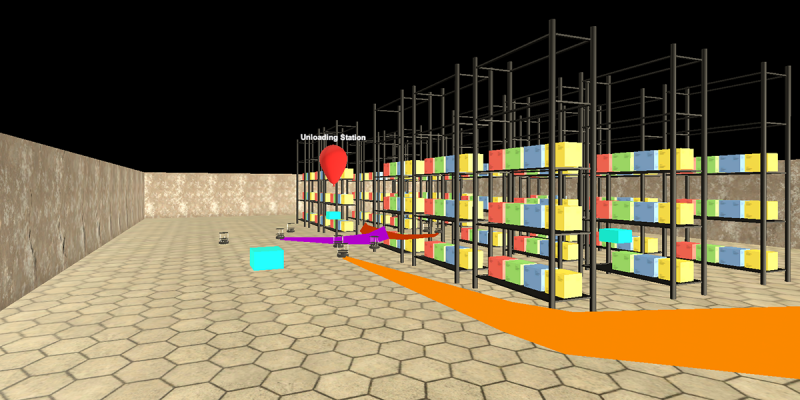

Unlike the entirely computer-generated experience of virtual reality (VR), augmented reality (AR) overlays information onto a view of the real world — say, a factory floor stacked with boxes and crates.

“Augmented reality brings a new communication modality between human and robots,” Zhang said. “Humans can hold up a tablet or cell phone, or wear AR glasses, and see what the robots are currently working on. Even when the robot is behind obstacles, the human can use AR to see through walls.”

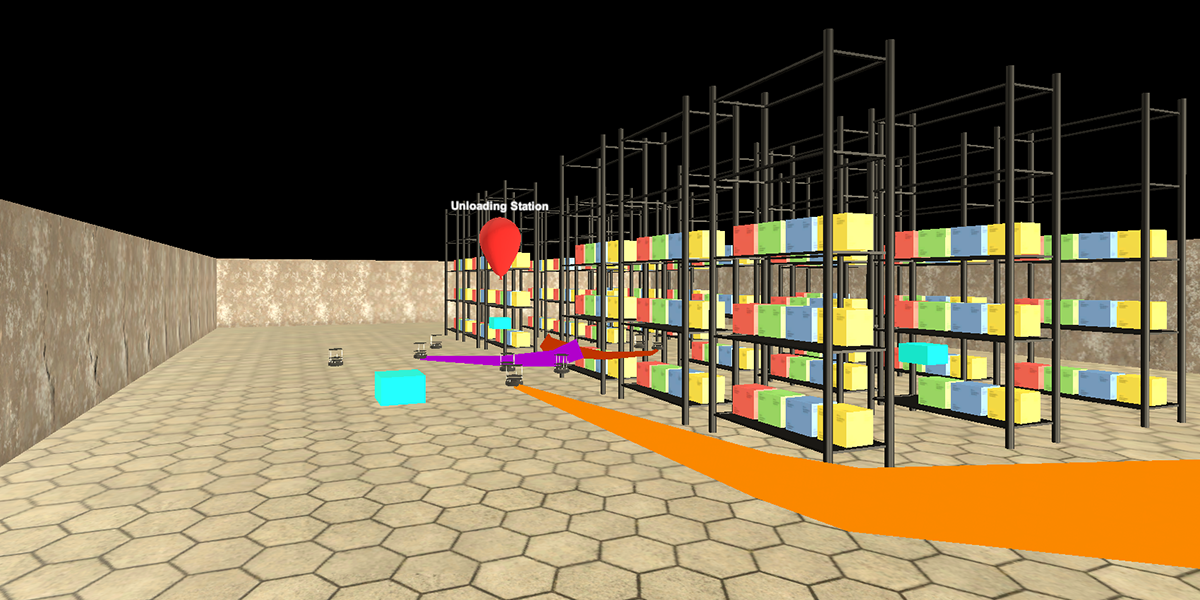

One challenge, though: figuring out what is most useful for humans to know. Too little information may not allow workers to make the best decisions, but too much can be overwhelming. The data also must be dynamic as the situation shifts from moment to moment.

All previous papers on AR-based human/robot collaboration rely on a manually defined visualization strategy, meaning that users decide in advance what information to show. Such a fixed strategy may not always offer the best configuration, so Zhang and his students turned to a machine-learning program that imitates a human expert.

“The expert uses a simulation platform, and we let the robots and the human avatar work on a collaborative delivery task in a warehouse environment,” he said. “The expert will pause it and say, ‘In this situation, the AR should visualize this, this and this,’ and then the simulation continues. Through thousands of demonstrations like this, the imitation learning algorithm works out a policy and in time can dynamically design what’s useful to display.”
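The idea Zhang describes can be illustrated with a minimal sketch of imitation learning by behavior cloning. This is not the paper's implementation: the state features, the display elements, the `expert_labels` rule standing in for the human expert, and the logistic model are all assumptions made for illustration.

```python
import math
import random

# Hypothetical AR display elements; illustrative names, not from the paper.
ELEMENTS = ["robot_position", "robot_path", "inventory_alert"]
MAX_DISTANCE_M = 10.0  # assumed warehouse scale, used to normalize distance


def featurize(state):
    """Map (distance_m, robot_occluded, inventory_low) to model inputs plus a bias term."""
    distance_m, occluded, inventory_low = state
    return [distance_m / MAX_DISTANCE_M, float(occluded), float(inventory_low), 1.0]


def expert_labels(state):
    """Stand-in for the human expert: one 0/1 choice per display element."""
    distance_m, occluded, inventory_low = state
    return [
        1 if distance_m < 5.0 else 0,  # show the robot's position when it is close
        1 if occluded else 0,          # show its path when it is hidden from view
        1 if inventory_low else 0,     # show an alert when stock is low
    ]


def make_demonstrations(n, rng):
    """Sample random situations and record what the stand-in expert would display."""
    demos = []
    for _ in range(n):
        state = [rng.uniform(0.0, MAX_DISTANCE_M), rng.randint(0, 1), rng.randint(0, 1)]
        demos.append((state, expert_labels(state)))
    return demos


def sigmoid(z):
    z = max(-60.0, min(60.0, z))  # clamp to avoid overflow in exp
    return 1.0 / (1.0 + math.exp(-z))


def train_policy(demos, epochs=50, lr=0.5):
    """Behavior cloning: fit one logistic classifier per display element with SGD."""
    weights = [[0.0] * 4 for _ in ELEMENTS]
    for _ in range(epochs):
        for state, labels in demos:
            x = featurize(state)
            for k, y in enumerate(labels):
                p = sigmoid(sum(w * xi for w, xi in zip(weights[k], x)))
                for i, xi in enumerate(x):
                    weights[k][i] += lr * (y - p) * xi
    return weights


def policy(weights, state):
    """Return the display elements the learned policy would show in this state."""
    x = featurize(state)
    return [
        ELEMENTS[k]
        for k, w in enumerate(weights)
        if sum(wi * xi for wi, xi in zip(w, x)) > 0.0
    ]


if __name__ == "__main__":
    rng = random.Random(0)
    learned = train_policy(make_demonstrations(500, rng))
    # A nearby, occluded robot with normal stock levels.
    print(policy(learned, [1.0, 1, 0]))
```

In this toy version the "expert" is a fixed rule, so the learned policy simply recovers it. In the setup described above, the labels would instead come from a person pausing the simulation and choosing what the AR display should show.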

For instance, in a warehouse it’s good to know where the robots are so that accidents can be avoided. Information about inventory, necessary repairs or other humans might be helpful sometimes but not all the time. Zhang believes that knowing what to show and when to show it can strengthen human-robot relations.

The next step for Zhang’s research is to try the AR system in a real warehouse, rather than in simulations or among stacks of boxes set up for practice in the Engineering Building. He also wants to allay any safety concerns so that the results can be widely adopted.

“We want to extend our current simulation platform and make it a benchmark for the robotics community,” he said. “We hope that people come up with new environments and new robots, and we define different measures — maybe accuracy, maybe efficiency, maybe human involvement, like how far a human has to walk in the environment — so then we can compare different visualizations and different AR agents using a unified platform.”